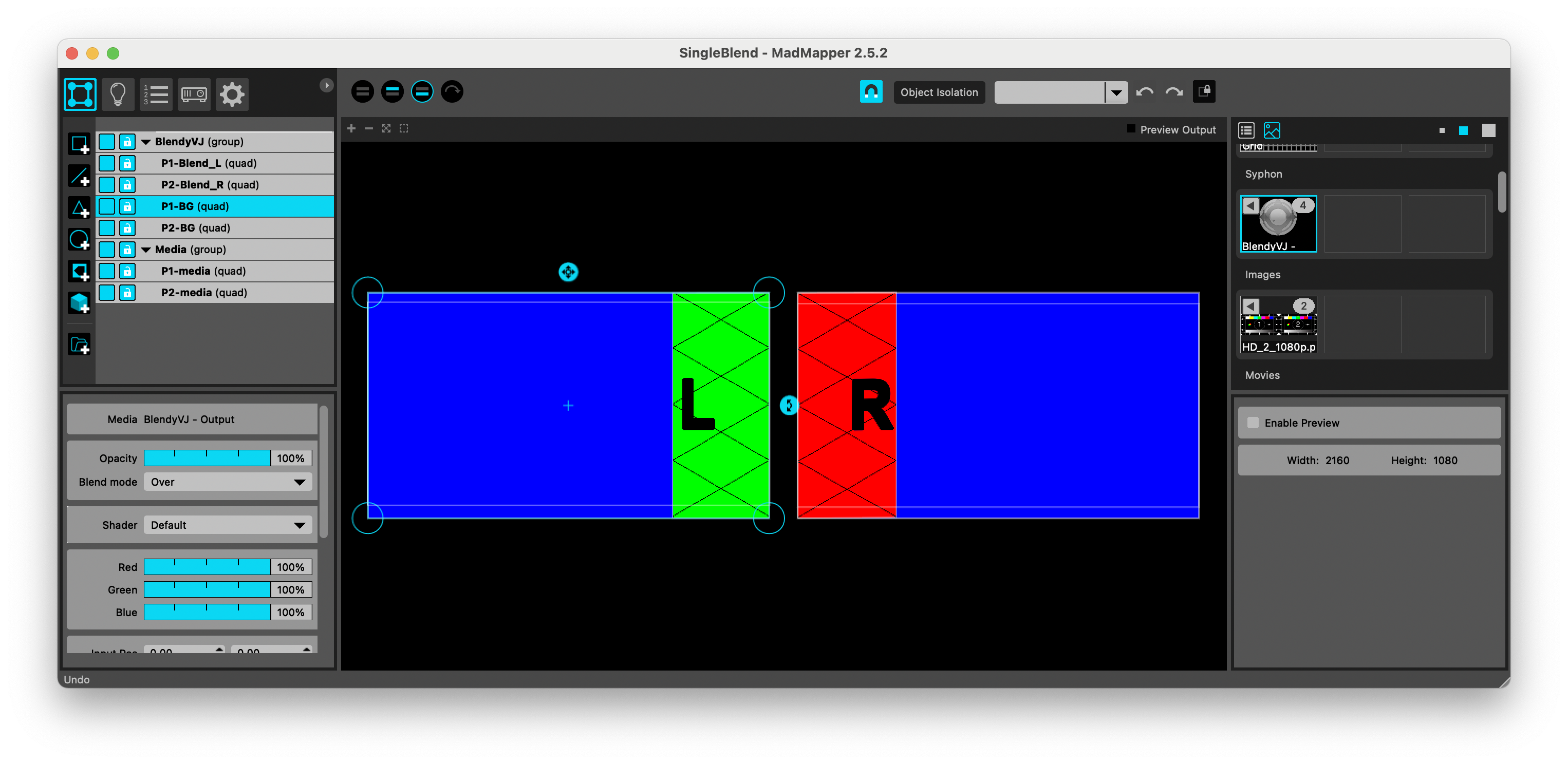

# MadMapper edge blending software

This volume is intended to be an exhaustive guide for all those who, having already mastered the basic techniques of video mapping, want to deepen the subject and in particular focus on Spatial Augmented Reality (SAR) applied to cultural heritage, whether artistic, archaeological or museological. The text, starting from a quick overview of the current technologies that are laying the foundations for those of the future, addresses the advanced techniques of surface acquisition and then moves on to the theme of modeling and MadMapper, currently the only software with specific functions for video mapping. SAR offers great unexplored opportunities in this sector, especially when you think of its hybridization with traditional museographic systems or with more futuristic mixed reality systems. It is often the best way to communicate the past, as it frees the user from the brightness of other systems.

m83nyc, since you're pretty new to blending, let me explain what I mean. To economize resources, I think people should really get used to sizing their comps to match their actual projection area rather than creating comps that are the sum of the projector resolutions.

If you have 2 XGA projectors (1024x768) that you're blending on a screen that's 9.5 m x 4 m, there would be two main approaches:

Option 1: You create a 2048x768 comp and adjust the slices' input area on the fly to match your projection area, knowing that you'll have a 224x768 pixel area that won't be used. If you center your output, you'll end up with 112x768 unused areas on the left and right.

Option 2: You calculate the pixel size of your projection area, which in this case would be (768 / 4 * 9.5) x 768, or 1824x768, and create a 1824x768 comp knowing that your blend area will be 224 pixels wide (2048 - 1824).
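The arithmetic behind the two options can be sketched in a few lines of Python. This is only an illustration of the calculation for the two XGA projectors on a 9.5 m x 4 m screen; the names `BlendLayout` and `blend_layout` are hypothetical and are not part of Resolume or MadMapper. It assumes the projectors sit side by side, each fills the full screen height, and the overlap is split evenly between neighbouring projectors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlendLayout:
    comp_width: int       # composition width matching the real projection area (px)
    comp_height: int      # composition height (px)
    blend_width: int      # width of each overlap zone between neighbours (px)
    unused_per_side: int  # unused margin per side if a full-width comp is centered (px)


def blend_layout(proj_w, proj_h, screen_w_m, screen_h_m, projectors=2):
    """Hypothetical helper: size a comp to the projection area.

    Assumes side-by-side projectors that each cover the full screen height.
    """
    if projectors < 2:
        raise ValueError("edge blending needs at least two projectors")

    # The projector height maps onto the screen height, which fixes pixels per metre.
    comp_width = round(proj_h / screen_h_m * screen_w_m)

    # Pixels the projectors have in total, minus what the screen needs, is the overlap.
    total_width = proj_w * projectors
    overlap = total_width - comp_width
    if overlap < 0:
        raise ValueError("projectors cannot cover the screen width")

    return BlendLayout(
        comp_width=comp_width,
        comp_height=proj_h,
        blend_width=overlap // (projectors - 1),
        unused_per_side=overlap // 2,
    )


if __name__ == "__main__":
    # Two XGA projectors on a 9.5 m x 4 m screen.
    print(blend_layout(1024, 768, 9.5, 4.0))
```

With these inputs the helper reports a 1824x768 comp (Option 2), a 224 pixel blend, and 112 pixels unused on each side of a centered 2048x768 comp (Option 1).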
Oaktown will route the layer with the ball to four slices, hide it from the comp and then position and warp the slices in the output. This tends to be generally easier and quicker to set up.

I will use an effect like Tile, Iterate, Slide or Hive8's 4 Screen Duplicator effect to position the ball in the composition four times, and then use slices to warp that exactly to fit. This tends to give you more freedom during performance, for instance when you realise halfway through that you want to add more layers.

Each technique can easily be adjusted to use separate balls for each object: just add a layer for each ball.

Guys, I seriously really appreciate your help! Very thankful. Is the way I am doing this correct for this type of stage setup I want to do, or is there a better way?

Wow, thanks for the input!!! This makes sense but does not, but I do understand. You guys seem to know exactly what I am looking to do here. I guess in a more scaled-out way you are saying that:

Virtual screen 1 = my canvas for mapping and masking. This makes total sense, very straightforward. Questions for this one: how can I assign one of my video layers in Resolume to output to these virtual screen objects as a whole? Like in the video oaktown posted, where the same video layer is going on the four 3D ball objects? Or how would I assign each mask/map 3D ball thing its own video layer?

Virtual screen 2 = would be for the edge blend of both projectors. I get this, and this video is key, thank you. How would I assign a video layer to this as well, mainly the background behind the 3D objects?